Hi @ambepat,

How far did you get with your scenario?

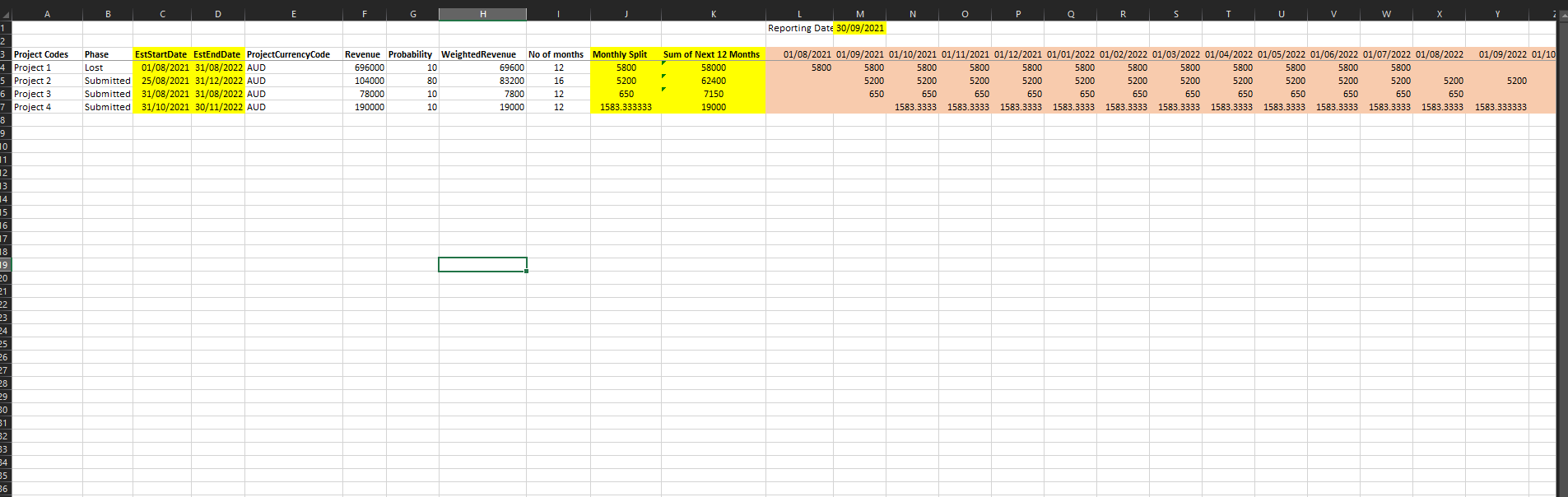

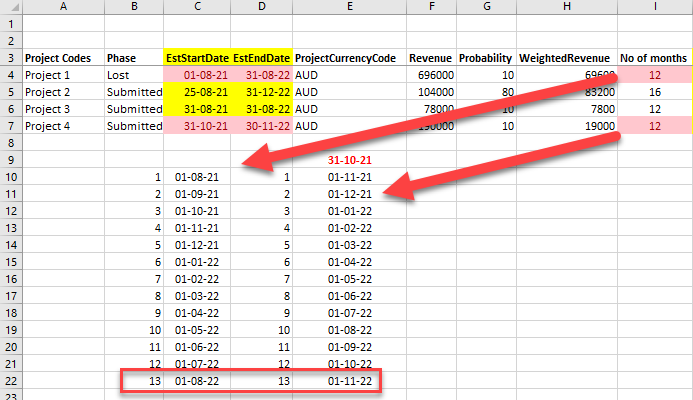

I seem to have a slight difference in opinion with the excel DATEDIFF calc, it should count full months between two dates but its not…

.

Here’s the tMonthsDiff custom function to count full months and get the Dates.

( sDate as date, eDate as date ) =>

Table.SelectRows(

Table.Group(

Table.ExpandRecordColumn(

Table.AddColumn(

Table.TransformColumnTypes( Table.FromColumns( { List.Dates( sDate, Number.From( eDate - sDate )+1, Duration.From(1)) }, { "Date" } ), {{"Date", type date }}),

"Record", each [ DaysInMonth = Date.DaysInMonth([Date]), StartOfMonth = Date.StartOfMonth([Date]) ] ),

"Record", {"DaysInMonth", "StartOfMonth"} ),

{"StartOfMonth"}, {{"Count", each Table.RowCount(_), Int64.Type}, {"DaysInMonth", each List.Max([DaysInMonth]), type number}}),

each [Count] = [DaysInMonth] )

and here’s the sample query, Note that due to the differences in Number of Months the figures are off - you will have to define some logic to deal with that.

let

Source = Table.FromRows(Json.Document(Binary.Decompress(Binary.FromText("fY5NC8IwDIb/ivTcQpJ+rDsKHj0I4mn0ou6gIANX/79N61w3wR6SJ0l5eLtOHJ7Dvb/EDQop9sMYUwNU4BXxRhekhNvTLlXXOgBIgDBNPPAH6zNzAxHkrObj8XV+3GLsr4nJ1n6k2o9git/noqn4HYsLOzIrv1759Z/8ja/iN35O72zeoF26za8b4eMGhbjI3kIlz9NkR+u11Pl9TyG8AQ==", BinaryEncoding.Base64), Compression.Deflate)), let _t = ((type nullable text) meta [Serialized.Text = true]) in type table [#"Project Codes" = _t, Phase = _t, EstStartDate = _t, EstEndDate = _t, ProjectCurrencyCode = _t, Revenue = _t, Probability = _t, WeightedRevenue = _t, #"No of months" = _t, #"Monthly Split" = _t, #"Sum of Next 12 Months" = _t]),

ChType = Table.TransformColumnTypes(Source,{{"EstStartDate", type date}, {"EstEndDate", type date}, {"Revenue", Int64.Type}, {"Probability", Int64.Type}, {"WeightedRevenue", Int64.Type}, {"No of months", Int64.Type}, {"Monthly Split", Int64.Type}, {"Sum of Next 12 Months", Int64.Type}}),

InvokedCF = Table.AddColumn(ChType, "GetInfo", each tMonthsDiff([EstStartDate], [EstEndDate])),

AddRecord = Table.AddColumn(InvokedCF, "Custom", each let WRev = [WeightedRevenue] in

[ Monthly Split = WRev / Table.RowCount([GetInfo]), Next 12 Months = Table.RowCount( Table.SelectRows( [GetInfo], each [StartOfMonth] >= Date.EndOfMonth(Date.From(DateTime.FixedLocalNow())) and [StartOfMonth] <= Date.AddMonths( Date.StartOfMonth(Date.From(DateTime.FixedLocalNow())), 12) )) * [Monthly Split] ] ),

ExpandRecord = Table.ExpandRecordColumn(AddRecord, "Custom", {"Monthly Split", "Next 12 Months"}, {"Monthly Split2", "Next 12 Months"}),

ExpandTable = Table.ExpandTableColumn(ExpandRecord, "GetInfo", {"StartOfMonth"}, {"StartOfMonth"})

in

ExpandTable

.

Here’s your sample file:

eDNA - Pipeline scenario.pbix (29.9 KB)

I hope this is helpful