Hi Experts,

I am taking the course on NLP with Python in Power BI, which is a great resource for building skills, since my work involves a lot of survey data.

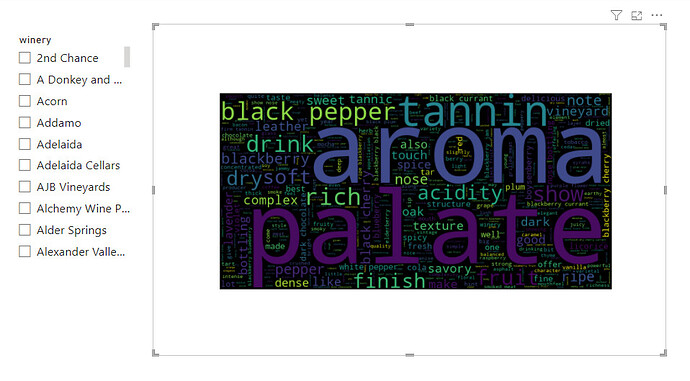

I would like to know how to avoid the blank space around the word cloud.

I tried adjusting the height and width, but the blank space still appears.

Can someone please assist?

The picture above is attached for reference.

Below is the Python code that runs in Power BI:

# The following code to create a dataframe and remove duplicated rows is always executed and acts as a preamble for your script:

# dataset = pandas.DataFrame(country, description, designation, points, price, province, region_1, region_2, taster_name, taster_twitter_handle, title, variety, winery)

# dataset = dataset.drop_duplicates()

# Paste or type your script code here:

# Load the essential libraries

import pandas as pd

import numpy as np

import nltk

from nltk.corpus import stopwords

from nltk.tokenize import word_tokenize

from nltk.stem import WordNetLemmatizer

from nltk import ngrams

import seaborn as sns

import matplotlib.pyplot as plt

from collections import Counter

from wordcloud import WordCloud

from PIL import Image

# Create a data cleaning function to remove stop words and punctuation, and to lemmatize

def big_clean_text(sentence):

    # Join all descriptions into one string (assumes every value is a string)
    sentence = " ".join(sentence)

    new_tokens = word_tokenize(sentence)

    new_tokens = [t.lower() for t in new_tokens]

    # Build the stop word set once instead of reloading the list for every token
    stop_words = set(stopwords.words('english'))

    new_tokens = [t for t in new_tokens if t not in stop_words]

    #new_tokens = [t for t in new_tokens if t not in bad_words]

    # Keep alphabetic tokens only, which drops punctuation and numbers
    new_tokens = [t for t in new_tokens if t.isalpha()]

    lemmatizer = WordNetLemmatizer()

    new_tokens = [lemmatizer.lemmatize(t) for t in new_tokens]

    counted = Counter(new_tokens)

    counted_2 = Counter(ngrams(new_tokens,2))

    counted_3 = Counter(ngrams(new_tokens,3))

    # Frequency tables (built here but not returned by the function)
    word_freq = pd.DataFrame(counted.items(),columns = ['word','frequency'])

    bi_gram_freq = pd.DataFrame(counted_2.items(),columns = ['bi-gram','frequency'])

    tri_gram_freq = pd.DataFrame(counted_3.items(),columns = ['Tri-gram','frequency'])

    return new_tokens

# Run the function

tokens = big_clean_text(dataset['description'])

# Join all the words

bag_of_words = " ".join(tokens)

# Create the word cloud

plt.figure(figsize=(16,9))

plt.axis('off')

word_cloud = WordCloud(width=800, height=400, max_words=500, stopwords=['wine','syrah','flavor']).generate(bag_of_words)

plt.imshow(word_cloud)

plt.show()
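
One possible cause of the blank space, offered as a sketch and not a confirmed fix: the figure is 16:9 while the word cloud is 800x400 (2:1), and Matplotlib also reserves margins around the axes by default. The snippet below sizes the figure to the image's aspect ratio and stretches the axes over the whole figure. The helper name `draw_without_margins` and the random array standing in for the word cloud are assumptions for illustration; in the script above the `word_cloud` object would be passed instead.

```python
import matplotlib
matplotlib.use("Agg")  # headless backend so the sketch also runs outside Power BI
import matplotlib.pyplot as plt
import numpy as np


def draw_without_margins(image, width_px, height_px, dpi=100):
    """Draw `image` on a figure with the same aspect ratio and no axes margins.

    Hypothetical helper, not part of the course code.
    """
    # Figure size in inches chosen so the figure is width_px x height_px pixels
    fig = plt.figure(figsize=(width_px / dpi, height_px / dpi), dpi=dpi)
    # Axes rectangle [left, bottom, width, height] covering the entire figure
    ax = fig.add_axes([0, 0, 1, 1])
    ax.imshow(image, aspect="auto")
    ax.axis("off")
    return fig, ax


# Stand-in for the word cloud: a random RGB image, 400 rows by 800 columns
cloud_image = np.random.rand(400, 800, 3)
fig, ax = draw_without_margins(cloud_image, width_px=800, height_px=400)
# In the Power BI script, plt.show() would follow here
```

Even with this change, the Power BI visual container may still show empty space if its own shape is not close to 2:1, so resizing the visual on the report canvas to roughly that ratio is probably needed as well.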