Hi @LASHBURN

The best approach would be to do this in the query editor.

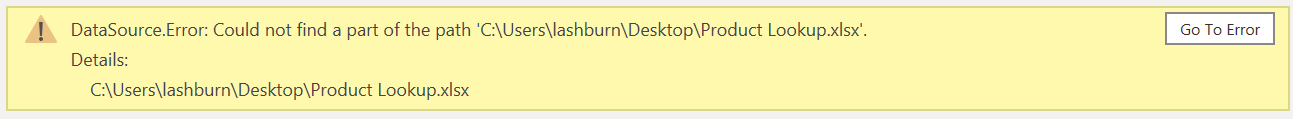

Please find the attached solution in Excel.

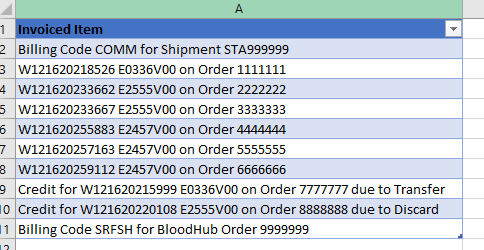

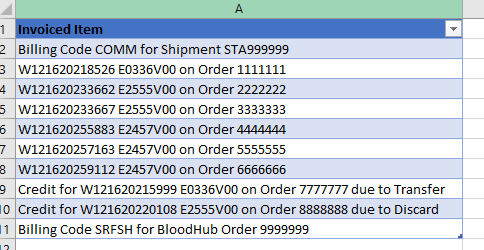

I copied your data from Pbi to Excel.

Product Result.xlsx (21.8 KB)

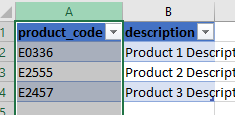

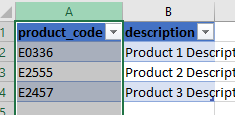

Example of raw data:

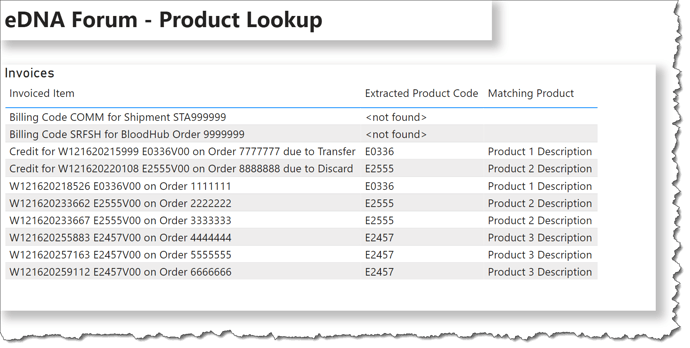

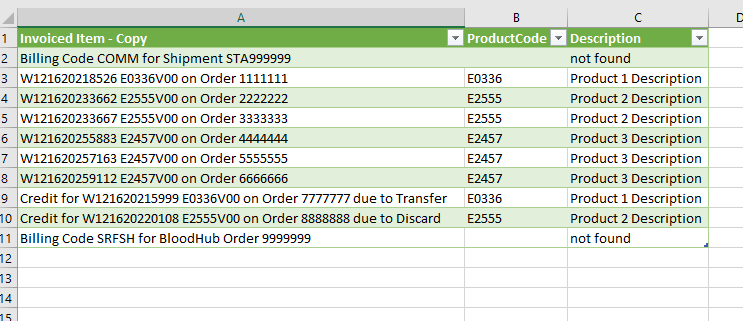

Solution:

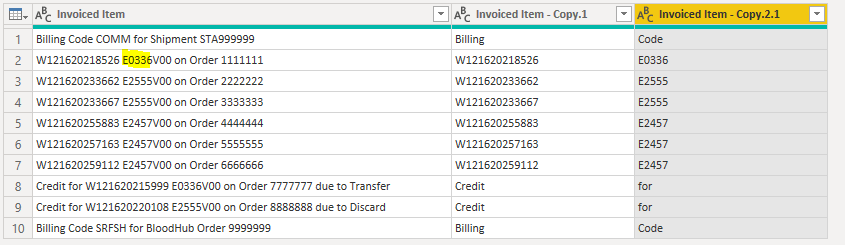

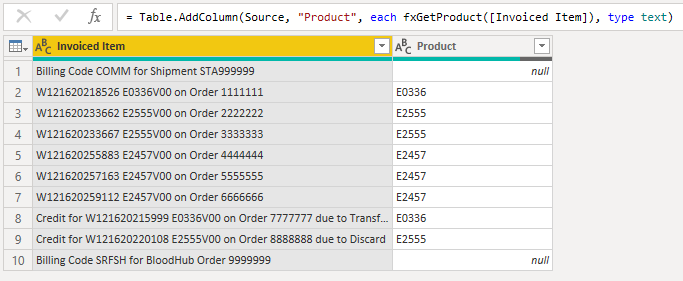

To get the same result as @Greg I split the column by delimeter and then I used Text.Middle formula.

The last step was to do a vlookup betwen two tables.

Please see below code:

let

Source = Excel.CurrentWorkbook(){[Name=“Table2”]}[Content],

#“Changed Type” = Table.TransformColumnTypes(Source,{{“Invoiced Item”, type text}}),

#“Duplicated Column” = Table.DuplicateColumn(#“Changed Type”, “Invoiced Item”, “Invoiced Item - Copy”),

#“Split Column by Delimiter” = Table.SplitColumn(#“Duplicated Column”, “Invoiced Item”, Splitter.SplitTextByDelimiter(“E”, QuoteStyle.Csv), {“Invoiced Item.1”, “Invoiced Item.2”}),

#“Changed Type1” = Table.TransformColumnTypes(#“Split Column by Delimiter”,{{“Invoiced Item.1”, type text}, {“Invoiced Item.2”, type text}}),

#“Added Custom” = Table.AddColumn(#“Changed Type1”, “Custom”, each Text.Middle([Invoiced Item.2],0,4)),

#“Changed Type2” = Table.TransformColumnTypes(#“Added Custom”,{{“Custom”, type text}}),

#“Added Custom1” = Table.AddColumn(#“Changed Type2”, “ProductCode”, each “E”&[Custom]),

#“Removed Columns” = Table.RemoveColumns(#“Added Custom1”,{“Invoiced Item.1”, “Invoiced Item.2”, “Custom”}),

#“Added Custom2” = Table.AddColumn(#“Removed Columns”, “Description”, each if [ProductCode] = null then “not found” else Product{[productcode=[ProductCode]]}[description])

in

#“Added Custom2”

I hope that helps.

Please have a look at the file.

Thank you,

Matty